Every time a plane flies over my house, I want to know what it is. Flight radar apps exist, but pulling out my phone, opening an app, and hunting for the aircraft is more friction than the curiosity warrants. I wanted to just ask. So I built a Claude Agent Skill that makes that possible.

ADS-B: The Radio Signal Every Plane Broadcasts

Most commercial and general aviation aircraft continuously broadcast their position, altitude, speed, and identifier over a radio protocol called ADS-B (Automatic Dependent Surveillance-Broadcast). The FAA has required it in most controlled U.S. airspace since January 2020. The practical result is that the sky is full of readable signals, and a whole community of enthusiasts has grown up around receiving them.

Several services aggregate this data and expose it through free APIs. I used adsb.lol, which covers most of North America and returns a clean JSON feed of aircraft within a radius of any coordinate.
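To make the "radius of any coordinate" query concrete, here is a minimal sketch of the request side. The endpoint shape (`/v2/point/{lat}/{lon}/{radius}`) and the `ac` response key are my understanding of the adsb.lol API and should be treated as assumptions, so check the current API docs before relying on them. The function names are hypothetical, not taken from the skill's actual script.

```python
import json
import urllib.request

# Assumed endpoint shape; verify against the adsb.lol API documentation.
BASE_URL = "https://api.adsb.lol/v2/point"


def build_url(lat, lon, radius_nm):
    """Build the (assumed) point-query URL for a center coordinate and radius."""
    return f"{BASE_URL}/{lat}/{lon}/{radius_nm}"


def parse_aircraft(payload):
    """Extract the aircraft list from a JSON response body.

    Assumes aircraft are listed under an "ac" key; returns an empty
    list if the key is missing or null.
    """
    data = json.loads(payload)
    return data.get("ac") or []


def fetch_aircraft(lat, lon, radius_nm=10, timeout=10):
    """Fetch aircraft near a point. This makes a live network request."""
    request = urllib.request.Request(
        build_url(lat, lon, radius_nm),
        headers={"User-Agent": "adsb-sketch"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return parse_aircraft(response.read().decode("utf-8"))
```

Only the standard library is used, which matches the no-dependencies, no-auth spirit of the approach. Keeping URL construction and parsing separate from the network call makes both easy to check without hitting the API.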

Building the Skill

Claude has a feature called Skills: short configuration files that tell it how to execute a task, what tools to use, and how to present the result. Think of it as a lightweight macro system. A skill is a markdown file with a description (used to trigger it) and instructions (used to execute it).

The ADS-B skill is simple. When I ask anything like "what's overhead?" or "what plane is that?", Claude runs a small Python script against the adsb.lol API, centered on my home coordinates. The script returns aircraft sorted by distance. Claude leads with the closest one and offers to share the rest.

The skill file looks roughly like this:

The Python script itself is under 100 lines and was also written by Claude. No dependencies, no auth, no infrastructure. The whole skill took about 15-20 minutes to build.
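The core of a script like this is small. The following is a sketch of the distance-sorting step, not the actual script: it assumes each aircraft record is a dict with `lat` and `lon` fields (an assumption about the feed's field names), computes great-circle distance with the haversine formula, and skips aircraft that report no position. The helper names are hypothetical.

```python
import math

# 6371 km mean Earth radius, expressed in nautical miles.
EARTH_RADIUS_NM = 3440.065

COMPASS_POINTS = [
    "north", "northeast", "east", "southeast",
    "south", "southwest", "west", "northwest",
]


def distance_nm(lat1, lon1, lat2, lon2):
    """Great-circle distance in nautical miles (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_NM * math.asin(math.sqrt(a))


def compass(track_deg):
    """Map a track in degrees to the nearest of eight compass points."""
    return COMPASS_POINTS[round(track_deg / 45) % 8]


def sort_by_distance(aircraft, home_lat, home_lon):
    """Return (distance_nm, aircraft) pairs, closest first.

    Aircraft without a reported position are dropped. The "lat" and
    "lon" field names are assumptions about the feed.
    """
    pairs = [
        (distance_nm(home_lat, home_lon, ac["lat"], ac["lon"]), ac)
        for ac in aircraft
        if "lat" in ac and "lon" in ac
    ]
    return sorted(pairs, key=lambda pair: pair[0])
```

The first pair in the result is the aircraft to lead with, and the remaining pairs are the "others in the area". Sorting on the distance alone (rather than the whole tuple) avoids comparing dicts when two aircraft happen to be equally far away.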

The Interaction

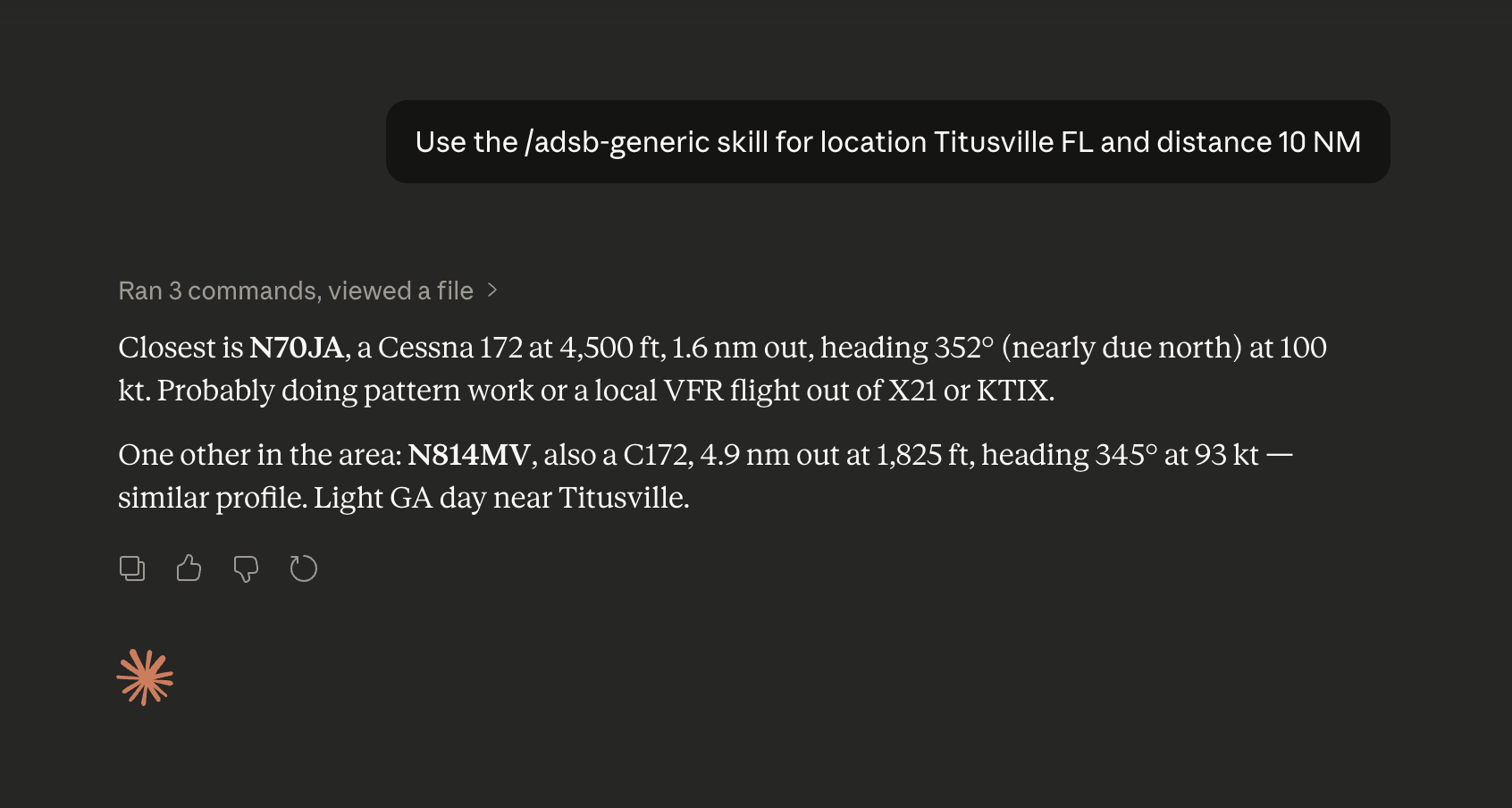

I asked: "Use my adsb skill and tell me what plane is overhead?"

Claude ran the script and came back (actual data changed for privacy):

The closest aircraft right now is AAL1337, an Airbus A319 at 4,200 ft, 1.1 nm out, heading southwest at 210 kt. Probably on approach to CMH. There are 4 others in the area — want details?

That's the whole trick. No app, no manual lookup. A question about the physical world, answered in context, in the moment.

Try It Out

I made a location-generic version. You can download it here and try it.

What Else Can I Connect?

Most AI assistant interactions are context-free: you bring the data, the model reasons over it. Skills that pull live external data change that dynamic. The model reaches out, samples the world, and returns an answer grounded in your actual context.

ADS-B happens to be free, unauthenticated, and globally available, which made it an easy first target. But the same pattern applies to anything with an API: local weather, service health, traffic, sensor readings. The question worth asking is what other real-world signals are this easy to connect.